Speeding up multi-browser Selenium Testing using concurrency

I haven’t used Selenium for a while, so I took some time to dig into the options for getting some mainline tests running against Caliper in multiple browsers. I wanted to be able to test a variety of browsers against our staging server before pushing new releases. Eventually this could be integrated into Continuous Integration (CI) or Continuous Deployment (CD).

The state of Selenium testing for Rails is currently in flux:

- Selenium Core/IDE (integrated browser test runner) vs. Selenium RC

- Webdriver/Selenium 2.0

- Webrat and Capybara are merging

So there are multiple gems / frameworks:

- selenium-on-rails (Selenium IDE/Core test runner)

- selenium-client (Selenium RC)

- Webrat (uses selenium-client)

- Capybara (supports WebDriver / Selenium 2.0)

- and likely others…

I decided to investigate several options to determine which is the best approach for our tests.

selenium-on-rails

I originally wrote a couple of example tests using the selenium-on-rails plugin. This allows you to browse to your local development web server at ‘/selenium’ and run tests in the browser using the Selenium test runner. It is simple and the most basic Selenium mode, but it has clear limitations. It wasn’t easy to run many different browsers using this plugin or to use it with Selenium-RC, and the plugin was fairly dated. This led me to try the next simplest thing, selenium-client.

open '/'

assert_title 'Hosted Ruby/Rails metrics - Caliper'

verify_text_present 'Recently Generated Metrics'

click_and_wait "css=#projects a:contains('Projects')"

verify_text_present 'Browse Projects'

click_and_wait "css=#add-project a:contains('Add Project')"

verify_text_present 'Add Project'

type 'repo','git://github.com/sinatra/sinatra.git'

click_and_wait "css=#submit-project"

verify_text_present 'sinatra/sinatra'

wait_for_element_present "css=#hotspots-summary"

verify_text_present 'View full Hot Spots report'

selenium-client

I quickly converted my selenium-on-rails tests to selenium-client tests, with some small modifications. To run tests using selenium-client, you need to run a Selenium-RC server. I set up Sauce RC on my machine and was ready to go. I configured the tests to run locally on a single browser (Firefox). Once that was working, I wanted to run the same tests in multiple browsers. I found that it was easy to dynamically create a test for each browser type and run them using Selenium-RC, but that it was incredibly slow, since the tests run one after another rather than concurrently. Also, you need to install each browser (plus multiple versions) on your machine. This led me to use Sauce Labs’ OnDemand.

browser.open '/'

assert_equal 'Hosted Ruby/Rails metrics - Caliper', browser.title

assert browser.text?('Recently Generated Metrics')

browser.click "css=#projects a:contains('Projects')", :wait_for => :page

assert browser.text?('Browse Projects')

browser.click "css=#add-project a:contains('Add Project')", :wait_for => :page

assert browser.text?('Add Project')

browser.type 'repo','git://github.com/sinatra/sinatra.git'

browser.click "css=#submit-project", :wait_for => :page

assert browser.text?('sinatra/sinatra')

browser.wait_for_element "css=#hotspots-summary"

assert browser.text?('View full Hot Spots report')
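The per-browser test generation mentioned above can be sketched with define_method. This is only an illustration, not the code I actually ran: the browser names and the run_walkthrough stand-in are hypothetical, and a real version would subclass Test::Unit::TestCase and open a Selenium session inside each generated method.

```ruby
# Hypothetical browser names; substitute whatever your Selenium-RC server supports.
BROWSERS = %w[firefox safari iexplore].freeze

class WalkthroughTests
  BROWSERS.each do |browser_name|
    # Generates test_walkthrough_firefox, test_walkthrough_safari, and so on.
    define_method("test_walkthrough_#{browser_name}") do
      run_walkthrough(browser_name)
    end
  end

  # Stand-in for the real Selenium walkthrough (assumption for this sketch).
  def run_walkthrough(browser_name)
    "walkthrough ran in #{browser_name}"
  end
end

# List the generated test methods.
puts WalkthroughTests.instance_methods(false).grep(/\Atest_/).sort
```

Because each generated method is an ordinary test, a standard test runner executes them one after another, which is exactly why this approach gets slow as the browser list grows.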

Using Selenium-RC and Sauce Labs Concurrently

Running on all the browsers Sauce Labs offers (12) took 910 seconds. That is cool, but way too slow, and since I am just running the same tests over again in different browsers, I decided that it should be done concurrently. If you are running your own Selenium-RC server, this will slow down a lot because your machine has to start and run all of the various browsers, so I don’t recommend this approach on your own Selenium-RC setup unless you configure Selenium-Grid. If you are using Sauce Labs, the tests run concurrently with no slowdown. After switching to running my Selenium tests concurrently, run time went down to 70 seconds, roughly 13 times faster.

My main goal was to make it easy to write fairly standard tests a single time, but be able to change the number of browsers I ran them on and the server I targeted. One approach that has been offered explains how to set up Cucumber to run Selenium tests against multiple browsers. This basically runs the rake task over and over for each browser environment.

Although this works, I also wanted to run all my tests concurrently. One option would be to concurrently run all of the Rake tasks and join the results. Joining the results is difficult to do cleanly, and otherwise you end up outputting the full rake test output once per browser (ugly when running 12 times). I took a slightly different approach, which just wraps any Selenium-based test in a run_in_all_browsers block. Depending on the options set, the code can run a single browser against your locally hosted application, or many browsers against a staging or production server. Then simply create a separate Rake task for each of the configurations you expect to use (against local Selenium-RC and Sauce Labs OnDemand).

I am pretty happy with the solution I have for now. It is simple and fast and gives another layer of assurance that Caliper is running as expected. Adding additional tests is simple, as is integrating the solution into our CI stack. There are likely many ways to solve the concurrent Selenium testing problem, but I was able to go from no Selenium tests to a fast multi-browser solution in about a day, which works for me. There are downsides to the approach: the error output isn’t exactly the same when run concurrently, but it is pretty close. Instead of seeing multiple errors for each test, you get a single error per test, which includes details about which browsers the error occurred on.
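The single-error-per-test behavior can be tried out without Selenium at all. The sketch below is a simplified stand-in, not the code from my test suite: it uses Ruby's built-in Thread and Mutex rather than the threadify gem, and the helper names (collect_browser_errors, failure_summary) and browser names are made up for illustration.

```ruby
# Runs the given block once per browser spec, each in its own thread,
# and returns one entry per browser whose block raised an error.
def collect_browser_errors(browser_specs, &block)
  errors = []
  lock = Mutex.new

  threads = browser_specs.map do |spec|
    Thread.new do
      begin
        block.call(spec)
      rescue StandardError => error
        # Guard the shared array so concurrent failures are not lost.
        lock.synchronize { errors << { :browser => spec, :error => error } }
      end
    end
  end

  threads.each(&:join)
  errors
end

# Folds the per-browser failures into a single message.
def failure_summary(errors)
  errors.each_with_index.map do |entry, index|
    "[#{index + 1}]: #{entry[:error].message} occurred in #{entry[:browser]}"
  end.join("\n")
end

# Hypothetical browser names; the block stands in for a real Selenium session.
errors = collect_browser_errors(%w[firefox safari iexplore]) do |name|
  raise "title mismatch" if name == "safari"
end
puts failure_summary(errors)
```

A test would then assert that the returned list is empty and use the summary as the failure message, so one failing assertion reports every browser that misbehaved.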

In the future I would recommend closely watching Webrat and Capybara, which I would likely use to drive the Selenium tests. I think the eventual merge will lead to the best solution in terms of flexibility. At the moment Capybara doesn’t support Selenium-RC, and the tests I originally wrote didn’t convert to the Webrat API as easily as they did directly to selenium-client (although setting up Webrat to use Selenium looks pretty simple). The example code given could likely be adapted easily to work with existing Webrat tests.

namespace :test do

namespace :selenium do

desc "selenium against staging server"

task :staging do

exec "bash -c 'SELENIUM_BROWSERS=all SELENIUM_RC_URL=saucelabs.com SELENIUM_URL=http://caliper-staging.heroku.com/ ruby test/acceptance/walkthrough.rb'"

end

desc "selenium against local server"

task :local do

exec "bash -c 'SELENIUM_BROWSERS=one SELENIUM_RC_URL=localhost SELENIUM_URL=http://localhost:3000/ ruby test/acceptance/walkthrough.rb'"

end

end

end

require "rubygems"

require "test/unit"

gem "selenium-client", ">=1.2.16"

require "selenium/client"

require 'threadify'

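class ExampleTest < Test::Unit::TestCase

# The browsers, selenium_rc_url, and test_url helpers this class relies on are not shown in this listing.

def run_in_all_browsers(&block)

if browsers.length > 1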

errors = []

browsers.threadify(browsers.length) do |browser_spec|

begin

run_browser(browser_spec, block)

rescue => error

type = browser_spec.match(/browser\": \"(.*?)\", /)[1]

version = browser_spec.match(/browser-version\": \"(.*?)\",/)[1]

errors << {:browser => type, :version => version, :error => error}

end

end

message = ""

errors.each_with_index do |error, index|

message +="\t[#{index+1}]: #{error[:error].message} occurred in #{error[:browser]}, version #{error[:version]}\n"

end

assert_equal 0, errors.length, "Expected zero failures or errors, but got #{errors.length}\n #{message}"

else

run_browser(browsers[0], block)

end

end

def run_browser(browser_spec, block)

browser = Selenium::Client::Driver.new(

:host => selenium_rc_url,

:port => 4444,

:browser => browser_spec,

:url => test_url,

:timeout_in_second => 120)

browser.start_new_browser_session

begin

block.call(browser)

ensure

browser.close_current_browser_session

end

end

def test_basic_walkthrough

run_in_all_browsers do |browser|

browser.open '/'

assert_equal 'Hosted Ruby/Rails metrics - Caliper', browser.title

assert browser.text?('Recently Generated Metrics')

browser.click "css=#projects a:contains('Projects')", :wait_for => :page

assert browser.text?('Browse Projects')

browser.click "css=#add-project a:contains('Add Project')", :wait_for => :page

assert browser.text?('Add Project')

browser.type 'repo','git://github.com/sinatra/sinatra.git'

browser.click "css=#submit-project", :wait_for => :page

assert browser.text?('sinatra/sinatra')

browser.wait_for_element "css=#hotspots-summary"

assert browser.text?('View full Hot Spots report')

end

end

def test_generate_new_metrics

run_in_all_browsers do |browser|

browser.open '/'

browser.click "css=#add-project a:contains('Add Project')", :wait_for => :page

assert browser.text?('Add Project')

browser.type 'repo','git://github.com/sinatra/sinatra.git'

browser.click "css=#submit-project", :wait_for => :page

assert browser.text?('sinatra/sinatra')

browser.click "css=#fetch"

browser.wait_for_page

assert browser.text?('sinatra/sinatra')

end

end

end
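The listing above calls browsers, selenium_rc_url, and test_url without showing them. A minimal sketch of what such helpers could look like, assuming they read the SELENIUM_BROWSERS, SELENIUM_RC_URL, and SELENIUM_URL variables set by the Rake tasks: the SeleniumConfig module name, the default values, and the browser list are hypothetical placeholders. A real Sauce Labs list would hold full browser specs in whatever format the regular expressions in the rescue block expect.

```ruby
module SeleniumConfig
  # Placeholder browser list (assumption); real entries would be full browser specs.
  ALL_BROWSERS = ["firefox", "safari", "iexplore"].freeze

  # "all" selects every browser; anything else falls back to a single browser.
  def self.browsers(env = ENV)
    env["SELENIUM_BROWSERS"] == "all" ? ALL_BROWSERS : ALL_BROWSERS.first(1)
  end

  # Host of the Selenium-RC server, defaulting to a local one (assumed default).
  def self.selenium_rc_url(env = ENV)
    env.fetch("SELENIUM_RC_URL", "localhost")
  end

  # Base URL of the application under test (assumed default).
  def self.test_url(env = ENV)
    env.fetch("SELENIUM_URL", "http://localhost:3000/")
  end
end

# Example: the settings the :staging Rake task passes in.
staging = {
  "SELENIUM_BROWSERS" => "all",
  "SELENIUM_RC_URL"   => "saucelabs.com",
  "SELENIUM_URL"      => "http://caliper-staging.heroku.com/"
}
puts SeleniumConfig.browsers(staging).length
puts SeleniumConfig.selenium_rc_url(staging)
```

Passing the environment in as an argument keeps the helpers easy to check without touching the real process environment.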

Improving Code using Metric_fu

Often, when people see code metrics they think, “that is interesting, I don’t know what to do with it.” I think metrics are great, but when you can really use them to improve your project’s code, that makes them even more valuable. metric_fu provides a bunch of great metric information, which can be very useful. But if you don’t know what parts of it are actionable it’s merely interesting instead of useful.

One thing to keep in mind when looking at code metrics is that a single metric may not be that interesting on its own. Looking at a metric’s trend over time can give you more meaningful information. Showing this trending information is one of our goals with Caliper. Metrics can be your friend watching over the project, like a second set of eyes on how the code is progressing, alerting you to problem areas before they get out of control. Working with code over time, it can be hard to keep everything in your head (I know I can’t). As the size of the code base increases, it can be difficult to keep track of all the places where duplication or complexity is building up in the code. Addressing the problem areas as they are revealed by code metrics can keep them from getting out of hand, making future additions to the code easier.

I want to show how metrics can drive changes and improve the code base by working on a real project. I figured there was no better place to look than pointing metric_fu at our own devver.net website source and fixing up some of the most notable problem areas. We have had our backend code under metric_fu for a while, but hadn’t been following the metrics on our Merb code. This, along with some spiked features that ended up turning into Caliper, led to some areas getting a little out of control.

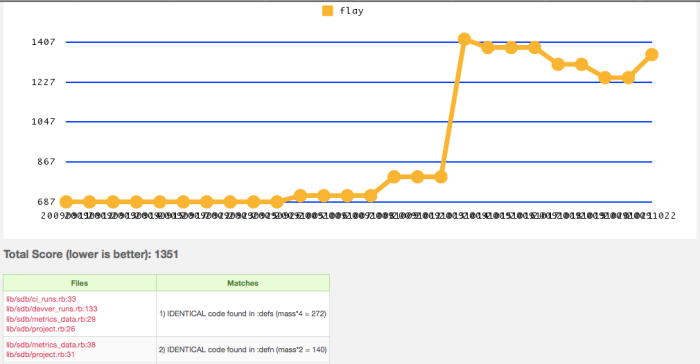

When going through metric_fu, the first thing I wanted to work on was making the code a bit more DRY. The team and I were starting to notice a bit more duplication in the code than we liked. I brought up the Flay results for code duplication and found that four database models shared some of the same methods.

Flay highlighted the duplication. Since we are planning on making some changes to how we handle timestamps soon, it seemed like a good place to start cleaning up. Below are the methods that existed in all four models. A third method ‘update_time’ existed in two of the four models.

def self.pad_num(number, max_digits = 15)

"%%0%di" % max_digits % number.to_i

end

def get_time

Time.at(self.time.to_i)

end

Nearly all of our DB tables store time in a way that can be sorted with SimpleDB queries. We wanted to change our time to be stored as UTC in the ISO 8601 format. Before changing to the ISO format, it was easy to pull these methods into a helper module and include it in all the database models.

module TimeHelper

module ClassMethods

def pad_num(number, max_digits = 15)

"%%0%di" % max_digits % number.to_i

end

end

def get_time

Time.at(self.time.to_i)

end

def update_time

self.time = self.class.pad_num(Time.now.to_i)

end

end

Besides reducing the duplication across the DB models, it also made it much easier to include another time method, update_time, which was in two of the DB models. This consolidated all the DB time logic into one file, so changing the time format to UTC ISO 8601 will be a snap. While this is a trivial example of an obvious refactoring, it is easy to see how helper methods can often end up duplicated across classes. Flay can come in really handy for pointing out duplication that creeps in over time.
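For pad_num to be callable as a class method on each model, the module needs some way to attach ClassMethods to the including class. One common Ruby idiom is a self.included hook; whether our module wires it up exactly this way is not shown above, so treat the following as a sketch. The ExampleRecord class and the iso_time method are hypothetical, added only to show what a UTC ISO 8601 version could look like.

```ruby
require "time"

module TimeHelper
  # Attach the class-level helpers whenever the module is included.
  def self.included(base)
    base.extend(ClassMethods)
  end

  module ClassMethods
    # Zero-pads the number so that string sorting matches numeric sorting.
    def pad_num(number, max_digits = 15)
      "%%0%di" % max_digits % number.to_i
    end

    # Hypothetical replacement format: UTC ISO 8601 strings also sort chronologically.
    def iso_time(time = Time.now)
      time.getutc.iso8601
    end
  end

  def get_time
    Time.at(self.time.to_i)
  end

  def update_time
    self.time = self.class.pad_num(Time.now.to_i)
  end
end

# Hypothetical model standing in for one of the DB models.
class ExampleRecord
  include TimeHelper
  attr_accessor :time
end

record = ExampleRecord.new
record.update_time
puts record.time
puts ExampleRecord.iso_time(Time.at(0))
```

Both formats are fixed width, which is what makes them safe to compare as plain strings in a SimpleDB query.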

Flog gives a score showing how complex the measured code is. The higher the score, the greater the complexity. The more complex code is, the harder it is to read, and the more likely it is to have a higher defect density. After removing some duplication from the DB models, I found our worst database model based on Flog scores was our MetricsData model. It included an incredibly high Flog score of 149 for a single method.

| File | Total score | Methods | Average score | Highest score |

|---|---|---|---|---|

| /lib/sdb/metrics_data.rb | 327 | 12 | 27 | 149 |

The method in question was extract_data_from_yaml. It was doing too much work and was frequently being changed: it extracted the data from 6 different metric tools and created a summary of the data. After a little refactoring, it was easy to make extract_data_from_yaml drop from a score of 149 to a series of smaller methods, with the largest score being extract_flog_data! (33.6).

The method went from a sprawling 42 lines of code to a cleaner and smaller method of 10 lines and a collection of helper methods that look something like the below code:

def self.extract_data_from_yaml(yml_metrics_data)

metrics_data = Hash.new {|hash, key| hash[key] = {}}

extract_flog_data!(metrics_data, yml_metrics_data)

extract_flay_data!(metrics_data, yml_metrics_data)

extract_reek_data!(metrics_data, yml_metrics_data)

extract_roodi_data!(metrics_data, yml_metrics_data)

extract_saikuro_data!(metrics_data, yml_metrics_data)

extract_churn_data!(metrics_data, yml_metrics_data)

metrics_data

end

def self.extract_flog_data!(metrics_data, yml_metrics_data)

metrics_data[:flog][:description] = 'measures code complexity'

metrics_data[:flog]["average method score"] = Devver::Maybe(yml_metrics_data)[:flog][:average].value(N_A)

metrics_data[:flog]["total score"] = Devver::Maybe(yml_metrics_data)[:flog][:total].value(N_A)

metrics_data[:flog]["worst file"] = Devver::Maybe(yml_metrics_data)[:flog][:pages].first[:path].fmap {|x| Pathname.new(x)}.value(N_A)

end
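Devver::Maybe is our own helper and its implementation is not shown here. For readers who want to try the same nil-safe extraction without it, a rough stand-in can be sketched with Ruby's built-in Hash#dig. This is not equivalent to Devver::Maybe, and the "N/A" value for N_A and the extract_flog_data name are assumptions made for this sketch.

```ruby
require "pathname"

# Assumed placeholder value; the real N_A constant is not shown in the post.
N_A = "N/A"

# Pulls the Flog summary out of a (possibly incomplete) metrics hash.
def extract_flog_data(yml_metrics_data)
  data = yml_metrics_data || {}
  worst_path = data.dig(:flog, :pages, 0, :path)

  {
    :description           => "measures code complexity",
    "average method score" => data.dig(:flog, :average) || N_A,
    "total score"          => data.dig(:flog, :total) || N_A,
    "worst file"           => worst_path ? Pathname.new(worst_path) : N_A
  }
end

# Missing sections fall back to N_A instead of raising NoMethodError.
puts extract_flog_data({})["total score"]
puts extract_flog_data({ :flog => { :total => 327 } })["total score"]
```

The point is the same as in the refactored method: each lookup can fail quietly, so a report missing one tool's data still produces a usable summary.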

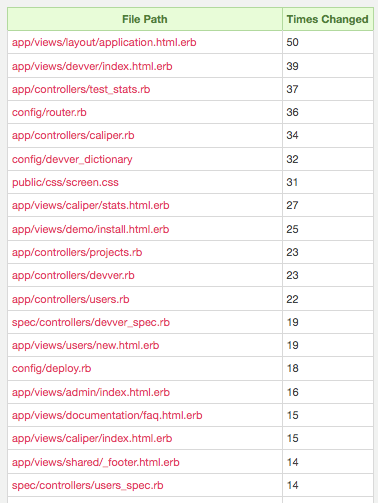

Churn gives you an idea of files that might be in need of a refactoring. Often if a file is changing a lot it means that the code is doing too much, and would be more stable and reliable if broken up into smaller components. Looking through our churn results, it looks like we might need another layout to accommodate some of the different styles on the site. Another thing that jumps out is that both the TestStats and Caliper controller have fairly high churn. The Caliper controller has been growing fairly large as it has been doing double duty for user facing features and admin features, which should be split up. TestStats is admin controller code that also has been growing in size and should be split up into more isolated cases.

Churn gave me an idea of where it might be worth focusing my effort. Diving into the other metrics made it clear that the Caliper controller needed some attention.
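The idea behind churn is simple enough to sketch: count how often each file shows up in the commit history. The snippet below is an illustration, not how the churn tool is implemented. It assumes input shaped like the output of `git log --name-only --pretty=format:` (one path per line, blank lines between commits), and the sample paths are made up.

```ruby
# Counts how many commits touched each file, most-changed first.
def churn_counts(log_output)
  counts = Hash.new(0)
  log_output.each_line do |line|
    path = line.strip
    next if path.empty? # blank lines separate commits
    counts[path] += 1
  end
  # Sort by descending count, then by path for a stable order.
  counts.sort_by { |path, count| [-count, path] }
end

# Hypothetical history: three commits, one path per line.
sample_log = <<~LOG
  app/controllers/caliper.rb
  app/views/layout/application.html.erb

  app/controllers/caliper.rb
  app/controllers/test_stats.rb

  app/controllers/caliper.rb
LOG

churn_counts(sample_log).each do |path, count|
  puts "#{count}\t#{path}"
end
```

Files that float to the top of a list like this are the ones worth checking against the other metrics first.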

The Flog, Reek, and Roodi Scores for Caliper Controller:

| File | Total score | Methods | Average score | Highest score |

|---|---|---|---|---|

| /app/controllers/caliper.rb | 214 | 14 | 15 | 42 |

Roodi Report

app/controllers/caliper.rb:34 - Method name "index" has a cyclomatic complexity is 14. It should be 8 or less.

app/controllers/caliper.rb:38 - Rescue block should not be empty.

app/controllers/caliper.rb:51 - Rescue block should not be empty.

app/controllers/caliper.rb:77 - Rescue block should not be empty.

app/controllers/caliper.rb:113 - Rescue block should not be empty.

app/controllers/caliper.rb:149 - Rescue block should not be empty.

app/controllers/caliper.rb:34 - Method name "index" has 36 lines. It should have 20 or less.

Found 7 errors.

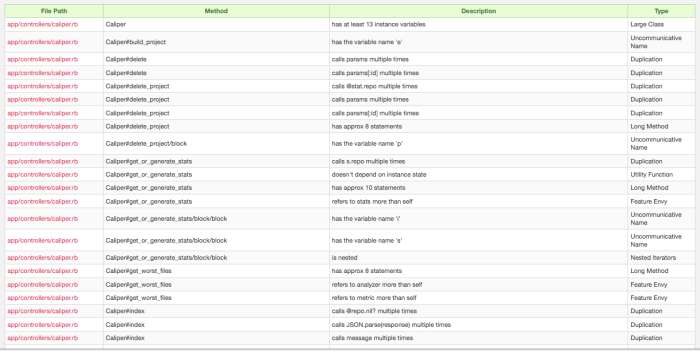

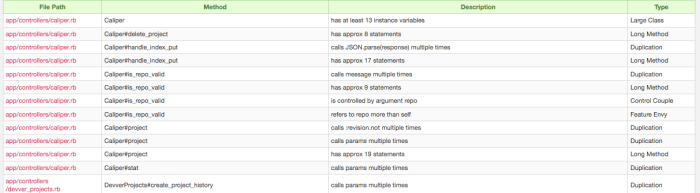

Roodi and Reek both tell you about design and readability problems in your code. The screenshot of our Reek ‘code smells’ in the Caliper controller should show how it had gotten out of hand. The code smells filled an entire browser page! Roodi similarly had many complaints about the Caliper controller. Flog was also showing the file was getting a bit more complex than it should be. After picking off some of the worst Roodi and Reek complaints and splitting up methods with high Flog scores, the code had become easily readable and understandable at a glance. In fact I nearly cut the Reek complaints in half for the controller.

Refactoring one controller, which had been quickly hacked together and was growing out of control, brought it from a dizzying 203 LOC to 138 LOC. The metrics drove me to refactor long methods (52 LOC => 3 methods, the largest being 23 LOC), rename unclear variables (s => stat, p => project), and move some helper methods out of the controller into the helper class where they belong. Yes, all these refactorings and good code designs can be done without metrics, but it can be easy to overlook bad code smells when they start small. Metrics can give you an early warning that a section of code is becoming unmanageable and likely prone to higher defect rates. The smaller file was a huge improvement in terms of cyclomatic complexity, LOC, code duplication, and more importantly, readability.

Obviously I think code metrics are cool, and that your projects can be improved by paying attention to them as part of the development lifecycle. I wrote about metric_fu so that anyone can try these metrics out on their projects. I think metric_fu is awesome, and my interest in Ruby tools is part of what drove us to build Caliper, which is really the easiest way to try out metrics for your project. Currently, you can think of it as hosted metric_fu, but we are hoping to go even further and make the metrics clearly actionable to users.

In the end, yep, this is a bit of a plug for a product I helped build, but it is really because I think code metrics can be a great tool to help anyone with their development. So submit your repo and give Caliper hosted Ruby metrics a shot. We are trying to make metrics more actionable and useful for all Ruby developers out there, so we would love to hear from you with any ideas about how to improve Caliper. Please contact us.
